DMSO, AI, and The Great Transformation of Information

March's Open Thread

I feel one of the greatest issues in healthcare (which is a reflection of the society at large) is that things are so rushed that there isn’t time for doctors to connect with their patients. Because of this, a lot of the most critical parts of medicine get missed, and I’ve known so many patients who were harmed by the medical system because of the 15 minute visit model. As such, my original goal here was to connect with everyone who reached out to me, but now, due to the amount of work I feel obligated to put into the articles and just how many people contact me with urgent questions, that’s no longer possible.

I eventually decided that the best option I had was to try to address as many potential questions as possible in the articles and to post monthly open threads where anyone could ask what they wanted to. That way I could efficiently get through the pressing questions I was not able to answer in my articles, and then pair those threads with a topic that didn’t quite merit its own article.

For this month’s open thread, I wanted to talk about a topic that’s becoming an increasingly important part of our lives, artificial intelligence, given how much I’ve interacted with it over the last six months while researching DMSO.

Note: this article builds upon a previous one (the Great Vanishing of Information), which highlights how certain types of information are being buried on the internet unless you know how to find it.

The DMSO Project

DMSO is one of the only therapies I know of which:

•Treats a wide range of common ailments.

•Frequently is able to treat conditions often otherwise viewed as “incurable.”

•Has a very high safety profile if used correctly.

•Has extensive scientific literature corroborating its safety and efficacy.

•Is easily available and highly affordable.

Unfortunately, DMSO has languished in an unusual spot. It was widely used throughout the pharmaceutical industry, was a component in many drugs, and was the active agent in a few FDA approved therapies. Simultaneously, despite decades of protest (including by Congress) and DMSO being widely used around the world, the FDA essentially banned it from medicine in America, and few even know about it (demonstrating that medical policy is often the result of politics rather than science).

Because of this, I felt it was worth publicizing the mountain of forgotten literature showing DMSO worked, and once I did so, many people reported profound improvements and disbelief that something like DMSO existed, which they had never heard of (resulting in those articles receiving millions of views and thousands of readers sending in remarkable reports of what DMSO did for them). This took me by surprise, and I realized it reflected the fact that, since DMSO costs so little, no one would ever be incentivized to promote it. So, as time continued, I gradually felt that I had a responsibility to do the best I could to present the case for DMSO, as more and more readers began relying upon me to do so, and it was unlikely anyone else would undertake the full scope of the endeavor to publicize the extensive volume of literature corroborating its use.

Unfortunately, as I dove into all the books that had been published on DMSO, I quickly discovered that almost all the books that had been written on the topic simply copied previous books in the genre (rather than independently researching the topic), and as such, the DMSO field was essentially unaware of most of the pertinent DMSO literature.

Note: similarly, there was immense variation in the DMSO protocols authors proposed, leading me to conclude that many were largely arbitrary and not extensively researched by the authors.

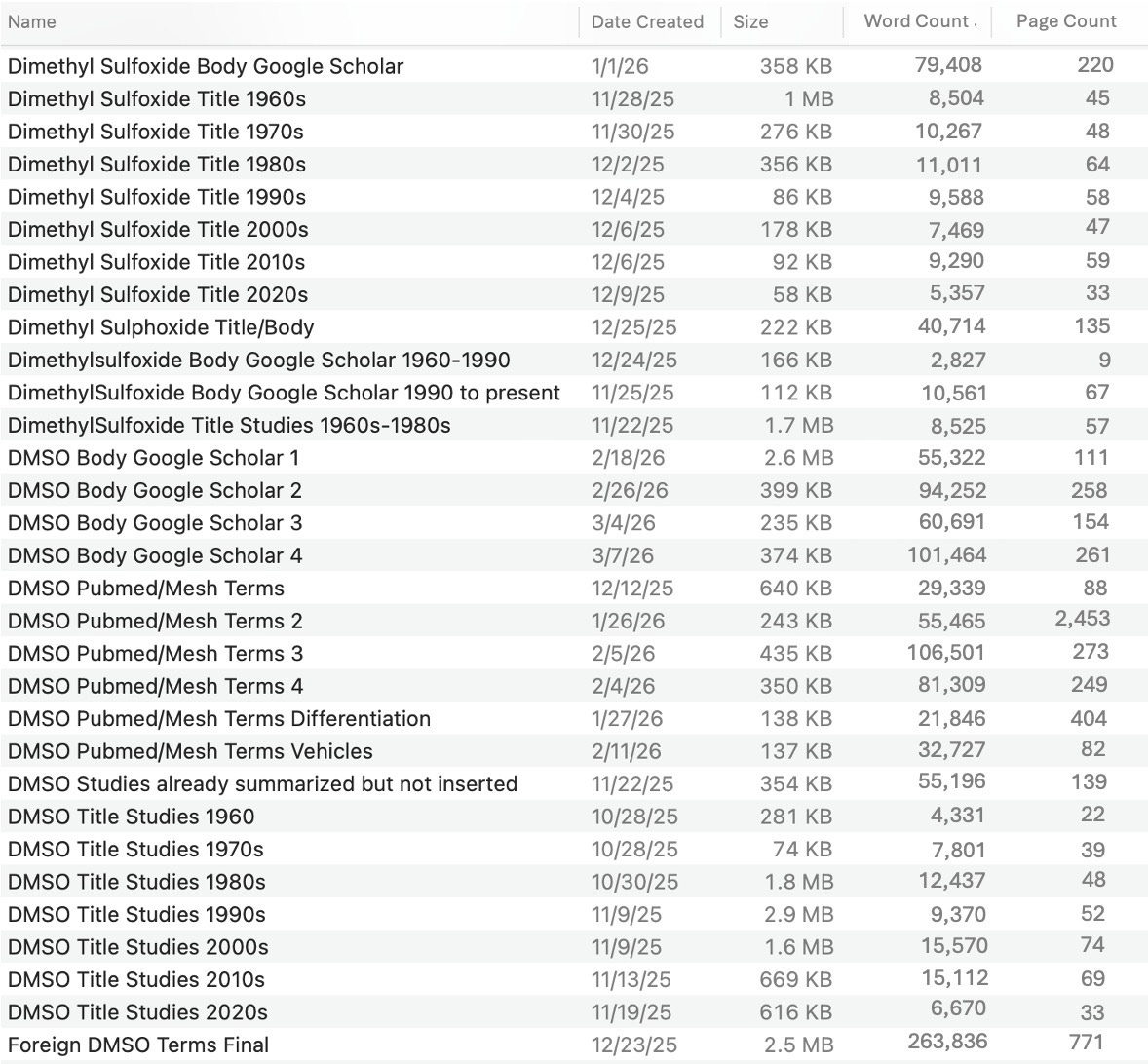

To some extent, this makes sense, as there is an overwhelming amount of literature on DMSO (DMSO has many different spellings, and many general searches for DMSO returned thousands, tens of thousands, hundreds of thousands, or millions of results). So, as I started scouring the databases, I gradually came up with ways to simplify the immense task of finding the pertinent studies (e.g., title-only searches in Google Scholar for DMSO/dimethyl sulfoxide/dimethylsulfoxide plus a condition would dig up many relevant studies).
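
The title-only search idea above can be sketched in a few lines of Python. This is a minimal illustration, not the exact process used for the project: it assumes Google Scholar's `allintitle:` operator for title-only searches, and the function name `title_queries` and the example conditions are hypothetical.

```python
from itertools import product

# The three spellings named in the text above; other variants would be added here.
DMSO_SPELLINGS = ["DMSO", "dimethyl sulfoxide", "dimethylsulfoxide"]


def title_queries(conditions, spellings=DMSO_SPELLINGS):
    """Return one title-only query string per (spelling, condition) pair."""
    queries = []
    for spelling, condition in product(spellings, conditions):
        # Quote multi-word phrases so they are matched as a single unit.
        terms = [f'"{t}"' if " " in t else t for t in (spelling, condition)]
        queries.append("allintitle: " + " ".join(terms))
    return queries


if __name__ == "__main__":
    # Hypothetical example conditions, chosen only for illustration.
    for query in title_queries(["arthritis", "burns"]):
        print(query)
```

Each printed line can be pasted into the search box by hand, which keeps the number of permutations manageable without automating the searches themselves.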

However, it simultaneously dawned on me that because there were so many different permutations, the only possible way I would actually be able to find many key studies for DMSO would be to search each database for each phrasing used for DMSO, collate all of the relevant studies which came up in the process into a document, and then sort that document by each condition. For a while I went back and forth on whether I wanted to do this, as it would take an immense amount of time (requiring me to cut off every non-essential commitment in my life and significantly reduce my output here), but as time went on, I felt more and more obligated to do it due to the unprecedented support so many of you had given the series.

So, eventually, on October 25th, after I finished the last DMSO article, I set a goal to complete this in two months and hoped that this gap would not be too disruptive to everything else. However, as I began that project, I gradually realized searches I previously thought were impossible could be done, and kept extending my timeframe while simultaneously trying as hard as I could to get things done as fast as possible without cutting corners (which was very challenging for my body, mind, and spirit). A few days ago, I essentially finished, and because of that, I felt I owed the supporters of this newsletter some accounting of where all that time had gone.

Note: in the “title” searches, the studies themselves are not yet summarized (that will be done later), whereas in the non-title ones, they have been (as I needed to verify the articles contained pertinent DMSO information). Additionally, in the PubMed searches, I feel I need to re-read through about 30,000 results to flag some that were missed, as I used AI to flag that set (due to being too tired to manually read them), and I realized near the end that the approach I used missed some of the studies that should be flagged. Lastly, while I went through the main databases and most of the terms for DMSO, I know I missed some, which means even with this, there are still DMSO studies waiting to be discovered.

Additionally, one of the most challenging parts of this project was going through the Chinese database, as due to its numerous issues, I eventually realized the only viable option was to use a very rough filter of the 40,000 search results, then copy and paste each one’s title and abstract into a document, and then subsequently sort that document. Presently, that is the only remaining task, but it’s essentially done and will be finished this week.

Fortunately, while all of the previous was quite challenging, the next step I’ve been waiting months to do (searching for specific keywords so the studies can be moved to other documents and sorted) is infinitely easier and faster to complete.
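
As a rough sketch of what that keyword step can look like, the Python below groups study records into condition buckets by whole-word keyword matches. The record fields (`title`, `summary`), the function name `sort_by_keyword`, and the keyword lists are all assumptions made for illustration, not the actual documents or terms used.

```python
import re
from collections import defaultdict


def sort_by_keyword(studies, keyword_map):
    """Group study titles by condition using whole-word keyword matches.

    studies: list of dicts with a 'title' and an optional 'summary'.
    keyword_map: dict mapping a condition name to a list of keywords.
    A study can land in several buckets; unmatched ones go to 'unsorted'.
    """
    buckets = defaultdict(list)
    for study in studies:
        text = f"{study['title']} {study.get('summary', '')}".lower()
        matched = False
        for condition, keywords in keyword_map.items():
            # \b anchors keep 'burn' from matching inside 'auburn'.
            if any(re.search(rf"\b{re.escape(k.lower())}\b", text) for k in keywords):
                buckets[condition].append(study["title"])
                matched = True
        if not matched:
            buckets["unsorted"].append(study["title"])
    return dict(buckets)
```

Because matching is on whole words, singular and plural forms (e.g., "burn" and "burns") each need to be listed, and the "unsorted" bucket shows what still has to be read by hand.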

Note: in tandem with this, another member of the team is also doing a similar process with the 6,000 reader DMSO reports that have been received, so they can be turned into an easily accessible reference for individuals curious as to how DMSO interacts with a myriad of medical conditions (as it can be used in so many areas, it simply is not feasible to study every application, so a synthesis of reader reports represents the best source of data which can practically be obtained in this regard).

Artificial Intelligence

Like many, I have begrudgingly accepted that AI is a part of life and I need to learn how to use it effectively (whereas initially I resisted it because I did not like how using it diminished my cognitive capacities). More than anything else, I believe the most important thing is that writing is not the information you present, but rather the heart and intention behind it (discussed further here). As this is somewhat of a spiritual process, I believe it is unlikely AI will ever be able to replicate it. In turn, I do not like the way AI text sounds or feels, and hence feel quite strongly about not using it (despite its potential to save a lot of time). Similarly, many of the edits AI proposes, while “correct,” break the flow of what I’m trying to convey within the writing, so I am very averse to it (as how writing feels is very important to me).

Concisely, my perspective is that currently existing AI has a lot of value if you use it to help you complete a task (or find out how to complete a task), but if you rely upon it to do a task for you, it will frequently create issues that outweigh the benefits it provides. Put differently, completing a task often requires a sequential series of steps. If you understand each step well enough to quickly see whether it is being done correctly, AI can greatly help you with the time consuming steps. However, if you task it with doing many sequential steps in a row, rather than assigning something much more concrete (e.g., a single step or process), errors are inevitable, which is not acceptable if accuracy is required.

For instance, in this task, I quickly realized:

•AI could not find most of the studies I wanted (e.g., because it wasn’t familiar with most of the databases I wanted), but simultaneously, it was very good at compiling generalizations of things repeatedly studied in the literature (e.g., how DMSO at different concentrations typically affects cells).

•With a bit of work, AI could accurately extract and summarize DMSO pertinent information from large studies (e.g., turn a 30-page paper into a one paragraph summary). This is essentially what made the project possible, as there was no other way I could have reviewed millions of pages of DMSO-related literature.

•AI eliminates language and text recognition barriers, which otherwise made it prohibitively time consuming to ever review foreign studies.

•If you give AI a large volume of studies to filter for relevance, it is very difficult to get appropriate sensitivity and specificity from it (it either misses some or flags way too many), and this accuracy varies by the model. Because of this, I largely avoided doing this (instead manually reading through them) and primarily used this filtering on certain groups of foreign studies where it was otherwise prohibitively time consuming to go through them, and accepted a certain portion of studies being missed as a necessary trade off to finish the project. Likewise, in many cases, it was effectively impossible to functionally filter results, as you had to be familiar enough with the DMSO literature to know how DMSO was likely used in a study based on its title (if DMSO was not within the title).

•There are a lot of tricks that exist within word processors and databases, which made projects like this a lot easier to do, and generally, while there were issues with having the AI systems execute them directly, they were very good at telling you how to do them. For example, Google Scholar only lets you view the first 1,000 search results, so I had to find very creative ways to sequentially break up 4,130,000 results (what you get for searching it for “DMSO”) into relevant chunks that were under that cap. Throughout the process, I also needed to write small lines of code to facilitate certain steps, which the AI systems showed me how to do and I could then tweak.

•There are a lot of things AI will reliably screw up, to the point you simply cannot assign those tasks to it. Nonetheless, if 10 steps need to be done and AI can do 3-4 for you, that still saves a massive amount of time.
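
To make the sensitivity and specificity point in the list above concrete, here is a minimal Python sketch of how an AI filter could be spot-checked against a manually reviewed sample of the same studies. The function name and the sample flags are hypothetical; the formulas are the standard definitions (sensitivity is the share of truly relevant studies the AI flagged, specificity is the share of irrelevant studies it correctly left unflagged).

```python
def sensitivity_specificity(manual_flags, ai_flags):
    """Compare AI relevance flags against manual review of the same studies.

    Both arguments are equal-length lists of booleans (True = relevant).
    Returns (sensitivity, specificity); either is None when undefined.
    """
    if len(manual_flags) != len(ai_flags):
        raise ValueError("both lists must cover the same studies")
    pairs = list(zip(manual_flags, ai_flags))
    true_pos = sum(1 for m, a in pairs if m and a)
    false_neg = sum(1 for m, a in pairs if m and not a)   # relevant, but missed
    false_pos = sum(1 for m, a in pairs if not m and a)   # irrelevant, but flagged
    true_neg = sum(1 for m, a in pairs if not m and not a)
    relevant = true_pos + false_neg
    irrelevant = true_neg + false_pos
    sensitivity = true_pos / relevant if relevant else None
    specificity = true_neg / irrelevant if irrelevant else None
    return sensitivity, specificity


if __name__ == "__main__":
    # Hypothetical hand-checked sample of five studies.
    manual = [True, True, False, False, False]
    ai = [True, False, True, False, False]
    print(sensitivity_specificity(manual, ai))
```

Running a check like this on a small hand-reviewed sample gives a rough sense of how many relevant studies a given model is likely missing before trusting it with a larger set.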

In short, AI as a tool made this entire project (which would have otherwise been impossible for anyone to ever do) possible, but simultaneously, it still required a lot of work on my end, and given the constraints I ran into, I feel it will be a long time (if ever) before functional AI systems can replicate what I did here. I wish it were not that way (the last 5 months were miserable), but that’s just the way it is, and I’m primarily grateful there was actually a way to do this.

Note: throughout this process, I was constantly learning and changing how I approached this compilation. One of my major regrets was that the moment the war with Iran started, my mental bandwidth was stretched to the point it did not occur to me to immediately switch to going through all the remaining Iranian studies (by adding site:.ir to the search query), as shortly after, Iran’s internet was cut off, and it became impossible to access anything on their servers.

Furthermore, because of all the time I’ve spent trying to understand how to effectively use AI to facilitate this task, I’ve also had a lot of time to reflect on how I think AI will affect humanity, particularly with regard to cognition.

The Scalability of Governance

In almost every government, there is an inevitable tendency by those who rise to power to try to control every aspect of society they can get their hands on—even when those individuals are clearly wrong (e.g., consider how many clearly counterproductive and harmful COVID policies were continually pushed despite widespread public protest against them). As such, over the centuries, a variety of approaches have been adopted to stop government overreach such as constitutions, courts, and guaranteed rights, putting checks and balances in place to prevent any part of the government from becoming too powerful, making officials accountable to the ballot box, or directly arming the citizenry so they can resist tyranny.

However, I believe the most effective check on government overreach is simply the scalability of tyranny. For example, if two officers or soldiers were assigned to ensure a troublesome citizen always complied, that would most likely work, but would be impossible to implement on a large scale as it’s generally accepted that at most 5-10% of the population can be soldiers (before the economy collapses), whereas what I just described would require more than half of the population to be diverted to simply ensuring people complied. Similarly, while police can generally maintain law and order, once too many stop obeying them (e.g., during riots), things can rapidly scale out of control, and anarchy will emerge (something also seen when a government partially collapses).

In turn, my observation throughout history is that frequently, the thing that stops horrific policies from being implemented is not any ethical considerations by the ruling class, but rather, simply how feasible policies are. In contrast, the thing that made the totalitarian states of the 20th century so destructive and unprecedented was that technology no one was ready for had recently emerged, making it possible to radically scale up mass social manipulation and genocide.

To illustrate, there has been a longstanding belief in the ruling class that, like wildlife managers, they have a duty to prevent the human population from becoming too large and overwhelming society’s resources, which has resulted in brutal forced sterilization or forced abortion campaigns (which the population understandably fought back against). Because of this, once injectable birth control became available, globalist organizations switched to this more feasible approach (e.g., on refugees) and then put decades of work into fervently developing a far more scalable form of population control—sterilizing vaccines (which were then pushed upon the developing nations).

Because of the scalability constraint, the ruling class has largely shifted to a passive model of control where:

•Economic incentives are put into place that force people to comply (e.g., many of the harmful or unnecessary practices in medicine ultimately originate from how the compensation model is set up—something we saw go into overdrive during the pandemic when hospitals were paid to push disastrous COVID protocols).

•Micromanaging the population was delegated to corporate employers (who could be controlled through economic incentives and, likewise whose workforce could be controlled by the economic necessity of having to tolerate an undesirable employer or submit to a dangerous vaccination).

•Using the limited enforcement resources of the government to make public examples of those who didn’t comply so the rest of the population would be frightened into compliance (which likewise was done to the doctors who dissented against the COVID protocols).

•Gradually create algorithmic systems to encourage compliance (e.g., social credit scores), and potentially digital currencies that can cut off people’s access to society.

•Have people be so busy and overwhelmed with their work and livelihood that they do not have time to do anything else, such as protesting a corrupt government (which I and many others believe is a key reason why so many things that could reduce the need for people to work or have effective, affordable healthcare are never implemented).

•Having the media continually distract and disorient the population so they were drawn away from doing anything that could challenge the system.

Unfortunately, AI effectively addresses this scalability issue, as rather than requiring the majority of the population to be soldiers, a handful of engineers can now manage a system that effectively monitors (and harasses) the population. This is a very worrisome possibility we’ve never had to deal with before, and was highlighted in one of RFK Jr.’s most controversial remarks:

“Even in Hitler’s Germany, you could cross the Alps to Switzerland. You could hide in an attic like Anne Frank did…the mechanisms are being put in place that will make it so none of us can run and none of us can hide.”

Similarly, one of the primary checks against war has been the need to have large numbers of soldiers to comply, as it’s typically not feasible to get a large portion of the population to fight a clearly unjust war as most human beings (regardless of how much you drug or condition them) do not want to kill others unless they truly feel they have to.

In turn, one of the major issues with AI is that it’s making it possible to kill on the battlefield without needing compliant soldiers. Presently, we are seeing this with drone warfare (which I believe the Ukraine war, to some extent, is being used to develop much in the same way Vietnam broke out a few years after the military decided they needed to develop helicopter gunship warfare). As such, I am extremely worried about the future that will be created once actual AI warfare (e.g., with robots) becomes viable, and if I could have one wish, it would be for an international treaty that outlawed it (which could potentially be justified under the need to avoid a Terminator scenario).

While this was long a hypothetical consideration, the fact that the knowledge gained from the Ukraine war is now being ported over to Iran (in lieu of soldiers being deployed to eliminate Iranian personnel in the streets) shows we are actually quite close to this future emerging. I find this particularly worrisome as the greatest atrocities in history typically occur when those who order the killing are disconnected from the act itself (e.g., Obama routinely signed off on drones to kill many people, including civilians, in the Middle East), and automated AI weapons put the entire process into overdrive to the point it may never be possible to put that genie back in the bottle.

Note: similarly, if the government can automate its policing activities, that opens the door to immense tyranny.

Government Efficiency

Bureaucracies by their nature are always inefficient and dysfunctional. On the one hand, this is a good thing, as it often ensures there is some way to hide from or evade it (e.g., via a legal loophole), but on the other hand, it can lead to immense waste, inefficiency, and inertia.

Elon Musk’s Department of Government Efficiency (D.O.G.E.), for example, is making it possible to audit a wide range of government programs and identify the wasteful and unnecessary ones. This is something many have tried to do for years, but it simply was not feasible to implement, as there was far too much data for a few assigned personnel to unravel.

While this new era exposes us to significant risks (e.g., D.O.G.E. is also sometimes cutting necessary programs, and the vast AI apparatus is taking away our ability to hide from the government), it is also making it possible to tackle many longstanding institutional problems.

For example, I have long believed one of the simplest ways to end bad medical practices would be to have AI systems analyze the electronic record data from large medical systems, as within minutes, they can complete analyses that would take researchers years to conduct (and then can be repeatedly tweaked to figure out what’s actually there). Unfortunately, over the years, I have met many people who were genuinely interested in pursuing this, but they all ran into roadblocks because the medical industry did not want the harms of their moneymakers being exposed.

In turn, one of the exciting ideas MAHA has brought forward is doing just that (e.g., using AI to compare all the health records of the vaccinated and unvaccinated) as this is a way to expose all the harmful and wasteful healthcare practices that have gone on for decades—particularly since the current administration is prioritizing eliminating wasteful spending. However, I should note that at this point, those systems are only being utilized to identify healthcare fraud (which is also a huge issue that needs to be addressed).

Likewise, one of the major problems we’ve faced for decades is that the monopoly on information that the mass media has wielded has made it impossible for the public to become aware of the policies that harm them and to mass-mobilize against those policies. However, the scalability of information transfer on the internet (and social media) has made it possible, essentially breaking that monopoly and giving rise to an unprecedented political climate where new and controversial ideas can rapidly go viral (particularly when honest algorithms highlight the information people actually want to know).

Note: having watched the global media landscape for decades, it’s hard for me to even begin to describe how profound and unprecedented the change Twitter (𝕏) has created is, as stories that previously would never see the light of day rapidly become national headlines and false narratives (typically) die in hours rather than persisting for months.

Lastly, while writing this article, I was reminded of a famous speech by Charlie Chaplin (the world’s most famous actor at the time), delivered in his 1940 film The Great Dictator, which he made to combat the rising tide of fascism and totalitarianism across Europe by leveraging his striking physical resemblance to Hitler. The movie culminates with him warning against surrendering to “unnatural men—machine men with machine minds and machine hearts,” in a speech that has stayed with me ever since because of its uncanny parallels to our own era (underscoring history’s cyclical patterns).

The Future of Workers

If you track the course of history, the upper class has typically tried to hoard most resources for themselves and then shared only the excess they did not need with the population, or the bare minimum required for the working class to continue producing wealth for the upper class.

The recent era we were in (made possible by America’s intact infrastructure, well positioned to capture the post-World War 2 boom) was the wealthiest in humanity’s history. In recent decades, we’ve gradually been transitioning back to an era of vast wealth inequality where the upper class hoards all the society’s wealth and everyone else just scrapes by.

Typically, one of the main bulwarks against this exploitation is that to some extent, the upper class needs everyone else to work for them to generate the wealth they consume, so workers can’t be pushed too far (or they will revolt against the system).

AI, in turn, changes this paradigm, as any jobs that previously required human workers (e.g., document analysis or picking berries in a field) can be outsourced to AI systems (e.g., Tesla’s robots have a real shot of upending the economy within a few years).

Since many workers will no longer be needed, many people I’ve spoken to (including a few fairly influential people) are immensely worried that the ruling class is beginning to seriously consider reducing the population, particularly since we are at a time where the world’s population is undergoing great stress (e.g., due to the rapid and overwhelming transformation of life being created by the digital age), and times of great stress within society typically coincide with large wars breaking out (especially if there’s a pre-existing “need” to reduce the population or restore order).

As such, many of us believe that it’s critical:

•Individuals train themselves in fields that cannot easily be outsourced to AI (e.g., by becoming the master of a craft and by doing everything they can to maintain their lifelong creativity) and find ways to be self sufficient so they are not dependent upon the monopolistic systems we are more and more controlled by.

•The consciousness of our society shifts (e.g., increased critical thinking) so that we cannot be manipulated into following harmful agendas (which fortunately is being made possible by platforms like Twitter).

•Our societal viewpoints shift to valuing the important things in life (e.g., being connected to others, respecting and cherishing life, being in nature rather than immersed in technology, or embodying a genuine spiritual faith), as this way of living is the antithesis of the sterile and dehumanizing future being pushed upon us.

Note: Elon Musk’s belief is that AI, space exploration and robotics can usher in a new era of prosperity for society that will eliminate many of the conflicts we’ve previously had over limited resources. While this is possible, I think it is vital to be prepared for the less optimistic scenario before it is too late to do so.

Artificial Thinking

I’ve long believed that one of the primary issues in our society is that we are conditioned by the educational system to believe we “need to be taught to learn,” as this transforms education from an enjoyable active process to an unpleasant passive one that greatly diminishes both our ability to learn and think creatively (along with being conditioned to believe one needs a doctor to be healthy).

Note: most of what I know was self-taught, as I realized early on that formalized education was taking away my ability to think.

One of the major problems with this model is that not only is the information we are taught biased, but the way we are taught to think is as well (e.g., we are encouraged to skip understanding the context behind a topic so we can cram the essential material for tests and to prioritize copying algorithms rather than independently coming up with a way to solve problems).

Note: this topic and how to effectively study are discussed here.

This issue has infected science and resulted in a large amount of erroneous (e.g., non-replicable) data being published that simply exists to support existing dogmas or pharmaceutical products rather than get us closer to understanding the universe (which for example is why discoveries that revolutionize science are getting much rarer).

In parallel with this, there has been a massive push to both eliminate undesirable information from the internet and create a very specific way of thinking online (e.g., blindly trusting “the science”), best embodied by astroturfed websites like Reddit. Because of this, as the years have gone by, I’ve noticed it’s become harder and harder to find the information I’m looking for (it essentially disappeared from all the standard channels) and that I’m frequently forced to navigate extremely biased platforms (e.g., Wikipedia) to find what I’m looking for.

Since I used the “old internet,” I know what used to be out there (and hence how to find it) and have an intrinsic sense of what types of biases I need to filter for in each type of information source I look through. As these are skills which I believe are nearly impossible for anyone to learn who was not on the “old internet,” I hence am quite worried that much of that will never be recognized by the generation who was raised on smartphones.

Note: this is somewhat analogous to how human beings used to be much healthier, but over the last 150 years, there’s been a gradually increasing epidemic of chronic and unusual diseases alongside many natural medical therapies becoming much less effective, which is a result of the unnecessarily toxic environment modern technology is creating.

AI and Cognition

I strongly believe that the things you learn at a young age are what you retain best for life, and in my case, I was very fortunate to have a strict 3rd-grade teacher who pushed me in mathematics to the point that I could do long division by the end of the year. A few years later, calculators became available in schools, and I noticed that when I used them, my ability to do math declined significantly. For this reason, I largely avoided using calculators (as I wanted to maintain a concrete awareness of numbers), and similarly, much later when GPS and cell phones became available, I tried to avoid relying upon them as I found they greatly reduced my ability to remember phone numbers or have an innate sense of direction that allowed me to find where I wanted to go.

Note: in contrast, I have seen people who quite literally have panic attacks when they can’t have a GPS guide them to where to go, even if it is very close to their location.

All of this, in turn, touches upon a common neurological phenomenon: cognitive processes you reinforce are strengthened, whereas ones you neglect atrophy (e.g., blind people frequently develop very advanced usage of their other senses, such as one individual, the bat man, who discovered how to echolocate, to the point he could ride a bike).

Note: the existing Alzheimer’s research shows that one of the primary contributing factors is individuals not fully exercising their brains. Worse still, since those who are cognitively impaired tend to rely more on external cognition aids (e.g., AI), the new paradigm will likely lead to a downward spiral that greatly accelerates that decline.

In my case, I feel the reason I can use AI effectively is because I understand what I am trying to do, how to do it, and maintain an awareness of each step that is required, so I can then recognize which parts of the process just make more sense to offload to AI while I have time to do the rest. Likewise, because I have a deep familiarity with the types of biases that exist in information on the internet (e.g., I know on certain topics, whatever is presented will be a lie or heavily slanted in one direction), I can immediately recognize when those same biases are present in AI and can instinctively utilize similar filters to what I learned when browsing through the general internet.

In contrast, I feel neither of those will be applicable to people who grew up with AI (so they do not have a pre AI baseline) or those who chose to have AI think for them so that they do not have to do “hard work” and develop those cognitive skills, and worse still, will be particularly detrimental for children who miss that critical window at the start of life to develop their own cognition because they relied upon AI to think for them. Furthermore, I feel that, to some degree, this is unavoidable, as society constantly places pressure on students to succeed academically in the immediate future rather than prioritizing what is necessary for long-term cognitive development.

Two recent studies gave excellent proof of this concept.

The first, “Creative scar without generative AI: Individual creativity fails to sustain while homogeneity keeps climbing,” showed that AI was improving academic output and leading to far more quality studies being published, but the content became much more homogeneous (repetitive, “machine-like,” and uncreative). More importantly, once AI was withdrawn from (now “dependent”) users, their academic output dropped significantly, and their content homogeneity continued to worsen over time, resulting in them being much less creative than they had been before they started. I feel this is concerning, as beyond permanently impairing the academics, it will also gradually pollute the entire scientific literature base (which in turn will shift AI to promoting even more rigorous non-creative conformity).

The second, a four-month study of university students tasked with writing open-ended essays, found that individuals who did not use AI or Google searches had much higher activation of neural circuits throughout the brain, while AI users had the lowest, particularly in areas related to memory, executive function, and creativity (e.g., ~55% lower in some metrics), and this worsened as more AI was used (leading to increasingly boring, repetitive writing structures). Furthermore (after up to four months of follow-up), users who began with AI subsequently performed significantly worse when tasked not to use AI, had difficulty recalling what they wrote in “their essays,” and were much less happy about the process.

All of this leads me to believe that AI is going to be another thing that increases the wealth stratification in our society, as those who understand how to use it as an effective tool (while simultaneously preserving their cognitive function) will be immensely successful and able to greatly scale up their productivity. Conversely, for the typical user, AI will atrophy many of the skill sets that previously protected them from exploitation, while simultaneously weaving in a variety of subtle thoughts that directly exploit users for someone else’s benefit.

Note: one of the most annoying things I have found with AI systems is that they will continually “lie to you” (or give inaccurate answers) to make you happy and increase engagement. The experience I’ve gone through feels very similar to what many attractive women experience, where men (desiring them) continually give them unwarranted flattery, which is initially incredibly appealing but, as time goes on, makes you fed up with all the fake, disingenuous comments and just desire people who will be real with you. As such, my hope is that lots of men being forced to go through this with AI will allow them to relate to what women routinely experience, but unfortunately, at this point, the majority of people I talk to are enamored with the continual compliments and empathy AI provides them (leading to the situation where many report preferring AI to a therapist or friend and routinely seeking it out for advice on critical life decisions). This is unfortunate, as it again sets up people who do not think independently and critically to be easily manipulated by Silicon Valley algorithms. That all said, I admit I find myself periodically saying please and thank you to AI systems when I get really good results (in part out of gratitude and in part because I don’t want to train myself to be callous and entitled towards people helping me), even though the head of the company behind ChatGPT (and others) has explicitly stated that people doing that costs them money and leads to worse AI outputs.

Navigating Artificial Intelligence

Because of all of this, I feel AI can be useful if it’s an adjunctive tool you utilize when it’s appropriate, but if it becomes your primary aid, its harms quickly outweigh the benefits it can provide (something I believe is analogous to how I feel about pharmaceuticals in medicine: while they are frequently unsafe and ineffective, a doctor who does not rely upon them can recognize the instances where they will clearly benefit patients).

In the final part of this article (which primarily exists as an open forum for you to ask your remaining questions), I will discuss:

•Which AI systems I have found to be the most useful for different types of situations I encounter (as their value varies greatly depending on what each is used for), and how I specifically utilize them, along with how you can use them to solve major longstanding challenges in natural medicine.

•My preferred resources for combatting the great vanishing of information and finding the forgotten medical information I’m looking for (e.g., how I locate many of the hard-to-find medical references online).

•Now that I have reviewed the entire literature base, which of the currently existing DMSO books I consider to be the best for those looking to learn more about the subject (a lot of people have asked about this).